This post continues from:

Backstage metrics flow:

Backstage (OpenTelemetry SDK) → OTLP/HTTP → Alloy → remote_write → Mimir → Grafana

We’ll build a Grafana dashboard using the Mimir datasource.

Lab context

- Namespace (Backstage): backstage

- Namespace (LGTM): observability

- Datasource: Mimir (Prometheus-compatible)

Prerequisites

In Grafana → Explore → Mimir, confirm that Backstage series are arriving (a non-empty result means metrics are flowing):

count({service_name="homelab-backstage"})

Notes on OTLP export cadence

If your app exports metrics every ~60s (e.g. exportIntervalMillis: 60000), prefer [5m] windows for rate() and histogram_quantile(), so that each window holds several samples.
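The window advice comes down to simple arithmetic, sketched below in Python. rate() and increase() need at least two samples inside the range window, so the helper counts how many samples a window is guaranteed to contain for a given export interval. The function names are hypothetical, and the sketch ignores jitter and dropped exports.

```python
def guaranteed_samples(window_s: int, interval_s: int) -> int:
    """Samples guaranteed to fall inside a range window, whatever the alignment."""
    return window_s // interval_s


def rate_is_computable(window_s: int, interval_s: int) -> bool:
    """rate() and increase() need at least two samples in the window."""
    return guaranteed_samples(window_s, interval_s) >= 2


# Compare 1m, 2m and 5m windows against a 60s export interval.
for window in (60, 120, 300):
    print(window, guaranteed_samples(window, 60), rate_is_computable(window, 60))
```

With a 60s interval, [2m] is the bare minimum and one late export leaves a gap, while [5m] tolerates a few missed samples.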

- Create dashboard variables

Constant variable: svc

- Type: Constant

- Name: svc

- Value: homelab-backstage

Query variables (enable Multi-value and Include All option, and set Custom all value to .*)

All use: Query type = Label values, datasource = Mimir, metric = http_server_duration_milliseconds_count.

- route (Label: Route), label = http_route, filter: service_name = $svc

- method (Label: Method), label = http_method, filter: service_name = $svc

- status (Label: Status), label = http_status_code, filter: service_name = $svc
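The custom all value .* works because PromQL's =~ matcher is anchored at both ends and treats a missing label as an empty string. The Python sketch below approximates that behaviour with re.fullmatch. PromQL uses RE2 syntax, so this is only an approximation that holds for simple patterns like these. The route values are hypothetical, and the (a|b) form is what Grafana typically produces for a multi-value selection.

```python
import re


def label_matches(pattern: str, value: str) -> bool:
    """Approximate PromQL's =~ matcher, which is anchored at both ends."""
    return re.fullmatch(pattern, value) is not None


# Hypothetical route values, for illustration only.
print(label_matches(".*", "/api/catalog/entities"))  # True
print(label_matches(".*", ""))                       # True: also matches series without the label
print(label_matches(".+", ""))                       # False: requires a non-empty label
print(label_matches("(/healthcheck|/api/catalog/entities)", "/healthcheck"))  # True
```

This is also why the per-route panels use http_route=~".+": that matcher drops series that have no route label, whereas .* keeps them.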

- Top stats (Stat)

- Requests/sec (RPS)

sum(rate(http_server_duration_milliseconds_count{service_name="$svc"}[5m]))

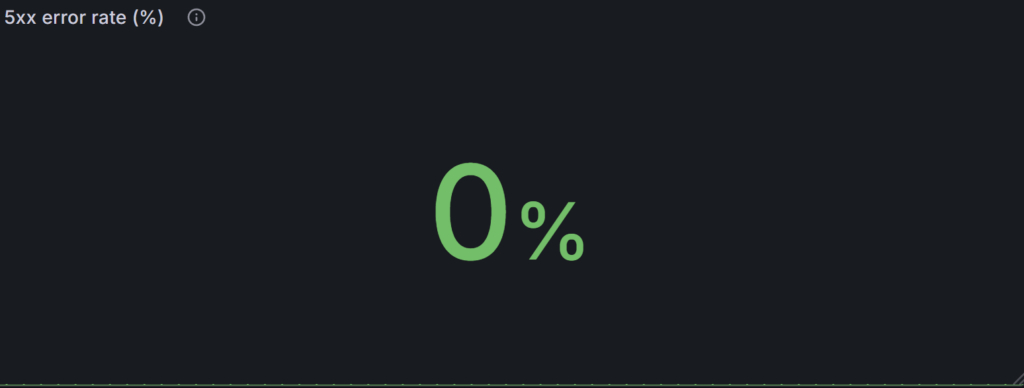

- 5xx error rate (%), zero-safe

100 * (
  (sum(rate(http_server_duration_milliseconds_count{service_name="$svc", http_status_code=~"5.."}[5m])) or vector(0))
  /
  clamp_min((sum(rate(http_server_duration_milliseconds_count{service_name="$svc"}[5m])) or vector(0)), 1e-9)
)

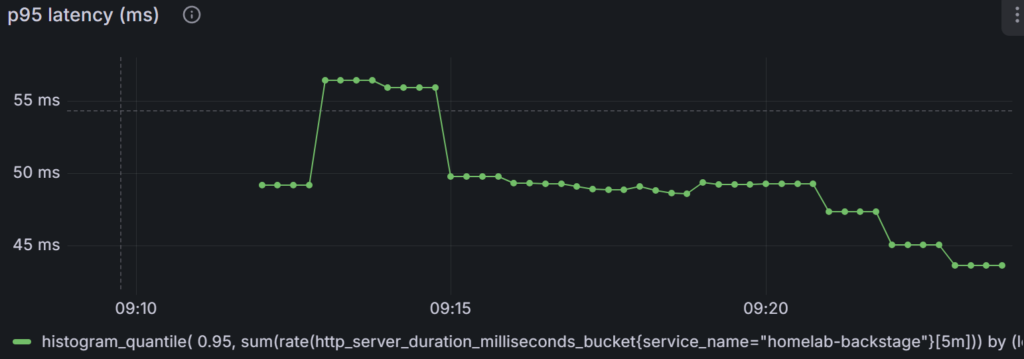
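The zero-safe pattern in the 5xx query can be mirrored in a few lines of Python. This is a sketch of the arithmetic only, not of how Mimir evaluates the query. None stands in for an empty query result, and the function name is hypothetical.

```python
from typing import Optional


def error_rate_pct(err_rps: Optional[float], total_rps: Optional[float]) -> float:
    """Mirror of the zero-safe PromQL expression; None stands for an empty result."""
    numerator = err_rps if err_rps is not None else 0.0        # `or vector(0)`
    denominator = total_rps if total_rps is not None else 0.0  # `or vector(0)`
    denominator = max(denominator, 1e-9)                       # clamp_min(..., 1e-9)
    return 100 * (numerator / denominator)


print(error_rate_pct(None, None))  # no traffic at all: 0.0 instead of "No data"
print(error_rate_pct(None, 4.0))   # traffic but no 5xx series: 0.0
```

Without the or vector(0) fallback, a service that has never returned a 5xx has no matching series, and the Stat panel shows "No data" rather than 0.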

- p95 latency (ms)

histogram_quantile(
  0.95,
  sum(rate(http_server_duration_milliseconds_bucket{service_name="$svc"}[5m])) by (le)
)
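For intuition about what this query returns, here is a simplified Python sketch of how a quantile is estimated from cumulative le buckets by linear interpolation. It follows the behaviour described in the Prometheus documentation for classic histograms, but it is not the actual implementation, and the bucket bounds and counts are made up.

```python
import math
from typing import List, Tuple


def histogram_quantile(q: float, buckets: List[Tuple[float, float]]) -> float:
    """Estimate a quantile (0 < q <= 1) from cumulative (upper_bound, count) buckets.

    Buckets must be sorted by upper bound and end with a +Inf bucket.
    """
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0.0  # the lowest bucket is assumed to start at 0
    for upper, count in buckets:
        if count >= rank:
            if math.isinf(upper):
                # Quantile falls in the +Inf bucket: report the highest finite bound.
                return prev_bound
            # Linear interpolation inside the bucket that contains the rank.
            return prev_bound + (upper - prev_bound) * (rank - prev_count) / (count - prev_count)
        prev_bound, prev_count = upper, count
    return math.nan


# Made-up bucket rates: upper bound in ms, cumulative count.
example = [(100.0, 50.0), (250.0, 90.0), (500.0, 100.0), (math.inf, 100.0)]
print(histogram_quantile(0.95, example))
```

In this example the 95th percentile lands halfway through the 250 to 500 ms bucket, so the estimate is about 375 ms. The result is only as precise as the bucket boundaries allow.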

- HTTP panels

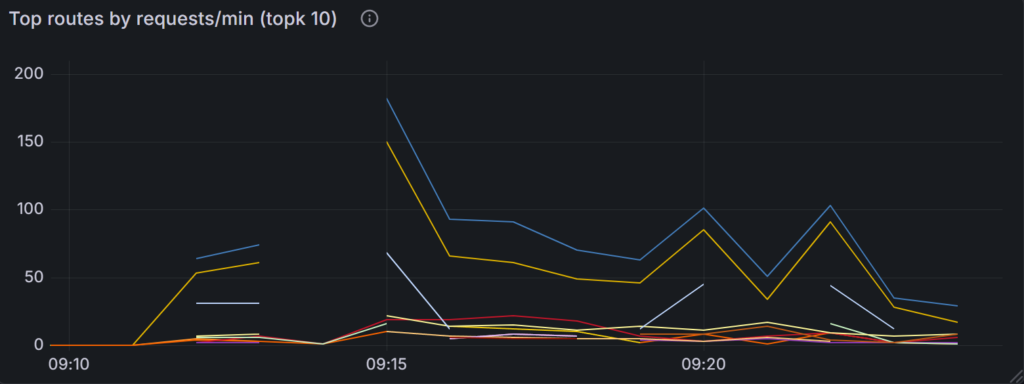

- Top routes by requests/min (topk 10)

Chart type: Time series (points or bars)

With sparse traffic you may see periodic spikes; that’s normal, because at a ~60s export interval a [2m] window holds only about two samples.

topk(10,
  sum(increase(http_server_duration_milliseconds_count{service_name="$svc", http_route=~".+"}[2m])) by (http_route)
) / 2

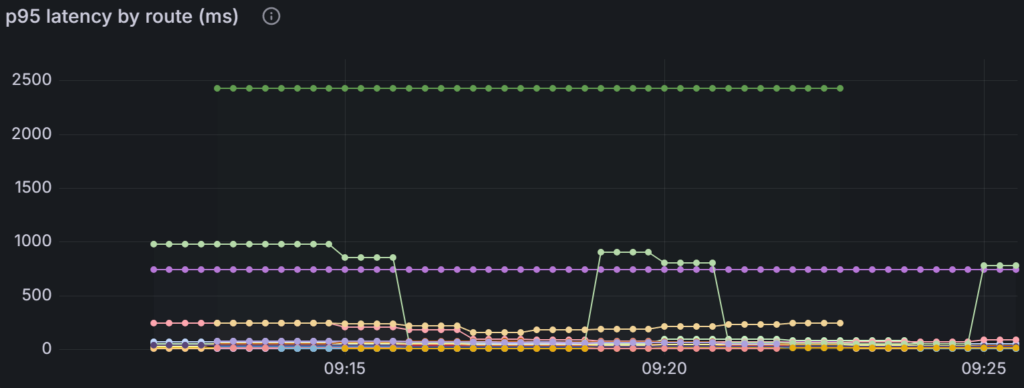
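The trailing / 2 converts an increase over two minutes into requests per minute. The arithmetic, with made-up numbers and hypothetical helper names, looks like this in Python:

```python
def per_minute_from_increase(increase: float, window_s: float) -> float:
    """Scale an increase over `window_s` seconds to a per-minute figure."""
    return increase * 60.0 / window_s


def per_minute_from_rate(rate_per_s: float) -> float:
    """rate() is per second, so multiply by 60 for a per-minute figure."""
    return rate_per_s * 60.0


# 30 requests counted over a 2m window is 15 requests/min, i.e. increase / 2.
print(per_minute_from_increase(30, 120))
# The same traffic expressed as a per-second rate (0.25 req/s) gives the same figure.
print(per_minute_from_rate(0.25))
```

If you change the window, the divisor has to change with it: a [5m] increase would be divided by 5.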

- p95 latency by route (ms)

Chart type: Time series (lines)

histogram_quantile(
  0.95,
  sum(rate(http_server_duration_milliseconds_bucket{service_name="$svc", http_route=~".+"}[5m])) by (le, http_route)
)

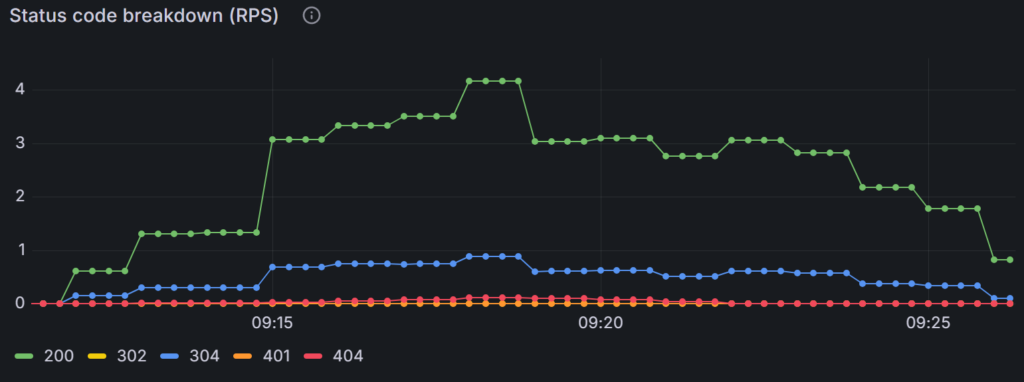

- Status code breakdown (RPS)

Chart type: Time series

sum(rate(http_server_duration_milliseconds_count{service_name="$svc"}[5m])) by (http_status_code)

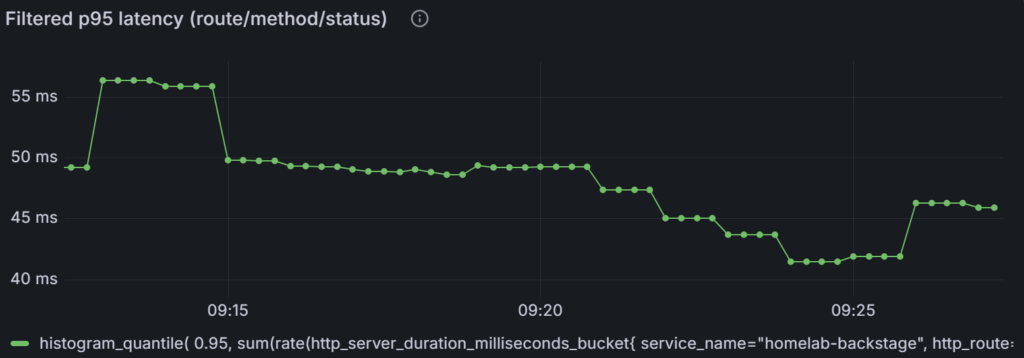

- Filtered p95 latency (route/method/status)

Chart type: Time series

histogram_quantile(
  0.95,
  sum(rate(http_server_duration_milliseconds_bucket{
    service_name="$svc",
    http_route=~"$route",
    http_method=~"$method",
    http_status_code=~"$status"
  }[5m])) by (le)
)

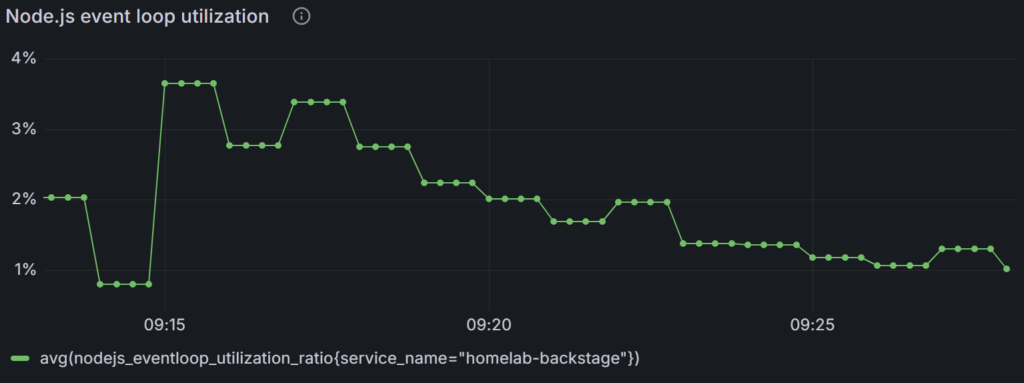

- Node.js / V8 runtime panels (Time series)

- Event loop utilization

avg(nodejs_eventloop_utilization_ratio{service_name="$svc"})

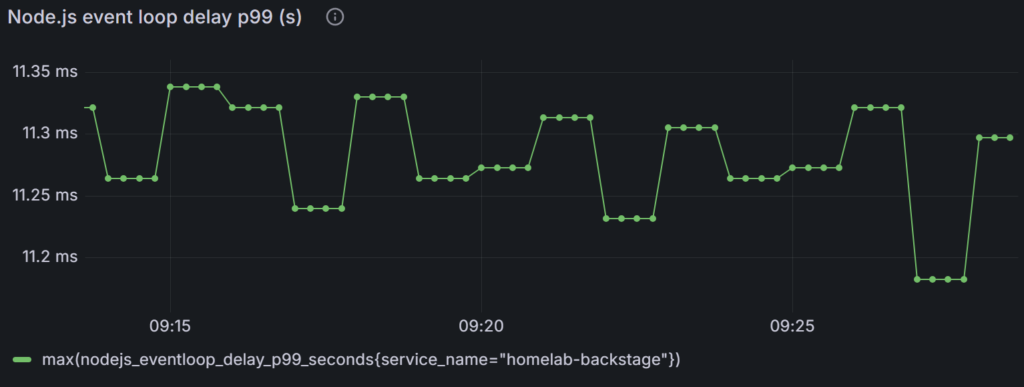

- Event loop delay p99 (s)

max(nodejs_eventloop_delay_p99_seconds{service_name="$svc"})

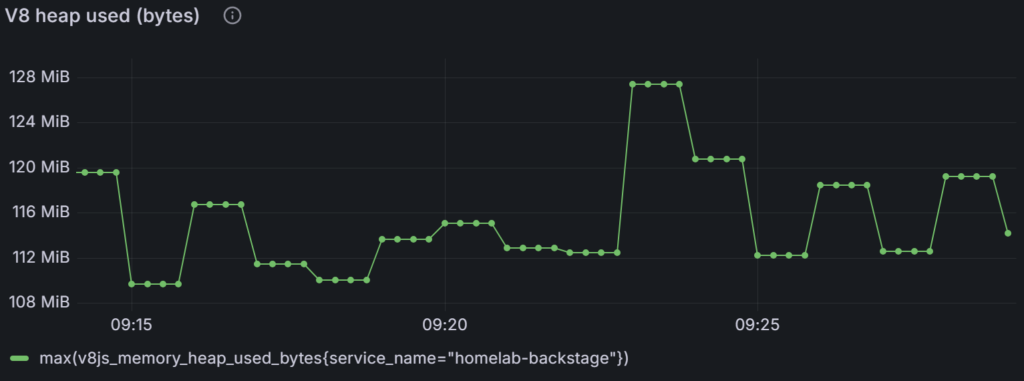

- V8 heap used (bytes)

max(v8js_memory_heap_used_bytes{service_name="$svc"})

- Backstage + DB panels (Time series)

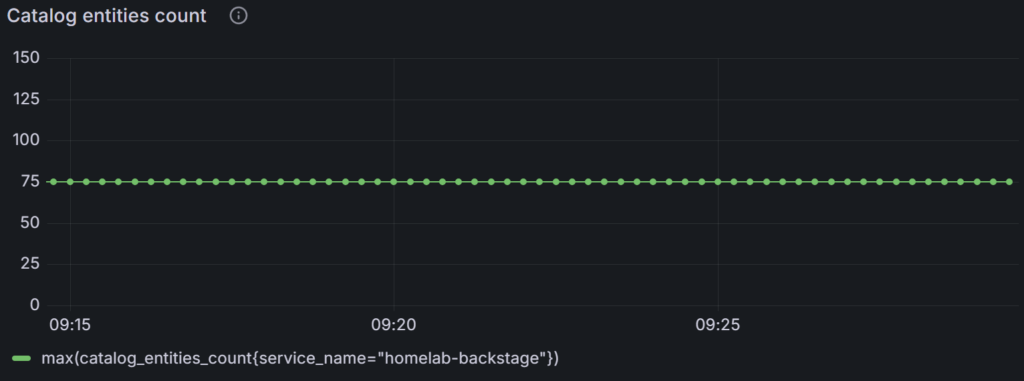

- Catalog entities count (if available)

max(catalog_entities_count{service_name="$svc"})

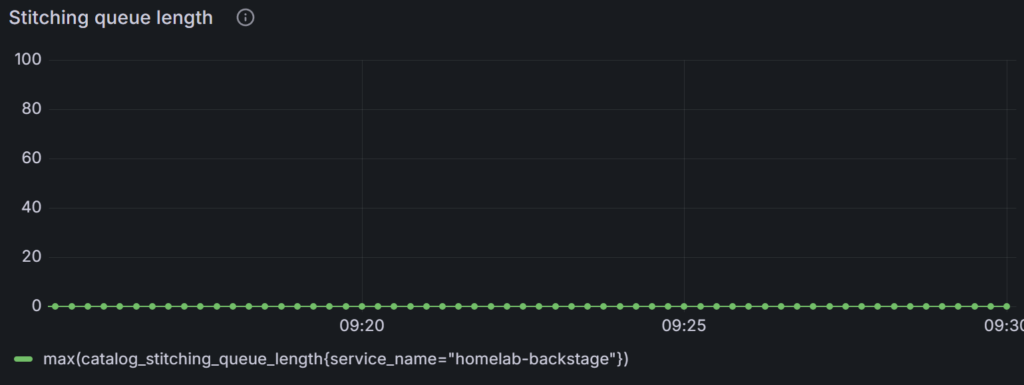

- Stitching queue length

max(catalog_stitching_queue_length{service_name="$svc"})

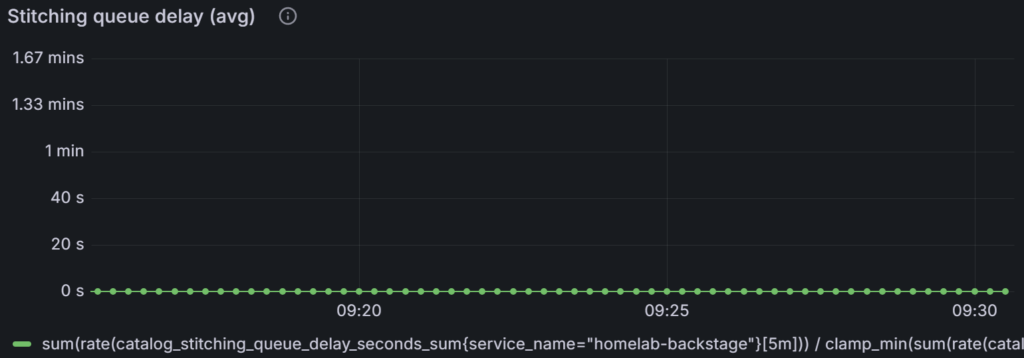

- Stitching queue delay (avg, s)

sum(rate(catalog_stitching_queue_delay_seconds_sum{service_name="$svc"}[5m]))
/
clamp_min(sum(rate(catalog_stitching_queue_delay_seconds_count{service_name="$svc"}[5m])), 1e-9)

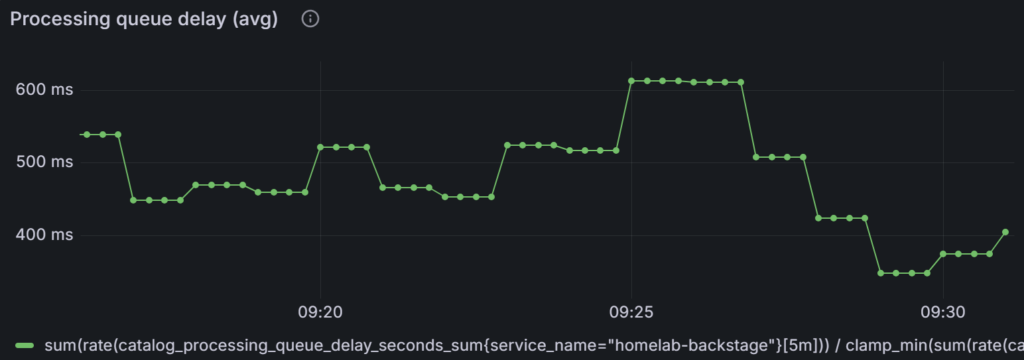
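The average-delay panels divide the rate of a _sum counter by the rate of the matching _count counter. A small Python sketch of that arithmetic follows. The counter values are made up, the function name is hypothetical, and counter resets and Prometheus' extrapolation are ignored.

```python
def average_from_counters(
    sum_start: float,
    sum_end: float,
    count_start: float,
    count_end: float,
    window_s: float,
) -> float:
    """Average observed value over a window, from _sum and _count counter samples."""
    sum_rate = (sum_end - sum_start) / window_s        # rate(..._sum[5m])
    count_rate = (count_end - count_start) / window_s  # rate(..._count[5m])
    return sum_rate / max(count_rate, 1e-9)            # clamp_min avoids division by zero


# Over 5 minutes: 30s of total delay spread across 60 observations, i.e. about 0.5s each.
print(average_from_counters(120.0, 150.0, 400, 460, 300))
# No new observations in the window: the clamp yields 0.0 instead of NaN.
print(average_from_counters(120.0, 120.0, 400, 400, 300))
```

The same pattern applies to the processing queue and DB operation panels; only the metric names and the grouping label differ.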

- Processing queue delay (avg, s)

sum(rate(catalog_processing_queue_delay_seconds_sum{service_name="$svc"}[5m]))
/
clamp_min(sum(rate(catalog_processing_queue_delay_seconds_count{service_name="$svc"}[5m])), 1e-9)

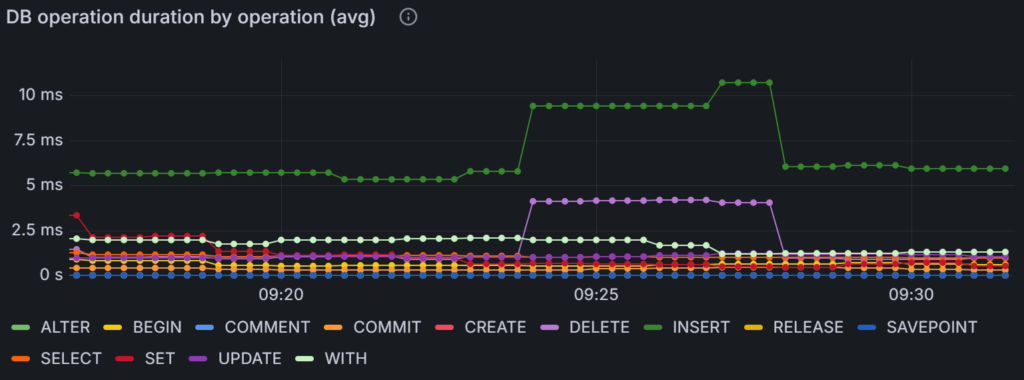

- DB operation duration by operation (avg, s)

sum(rate(db_client_operation_duration_seconds_sum{service_name="$svc"}[5m])) by (db_operation_name)
/
clamp_min(sum(rate(db_client_operation_duration_seconds_count{service_name="$svc"}[5m])) by (db_operation_name), 1e-9)
